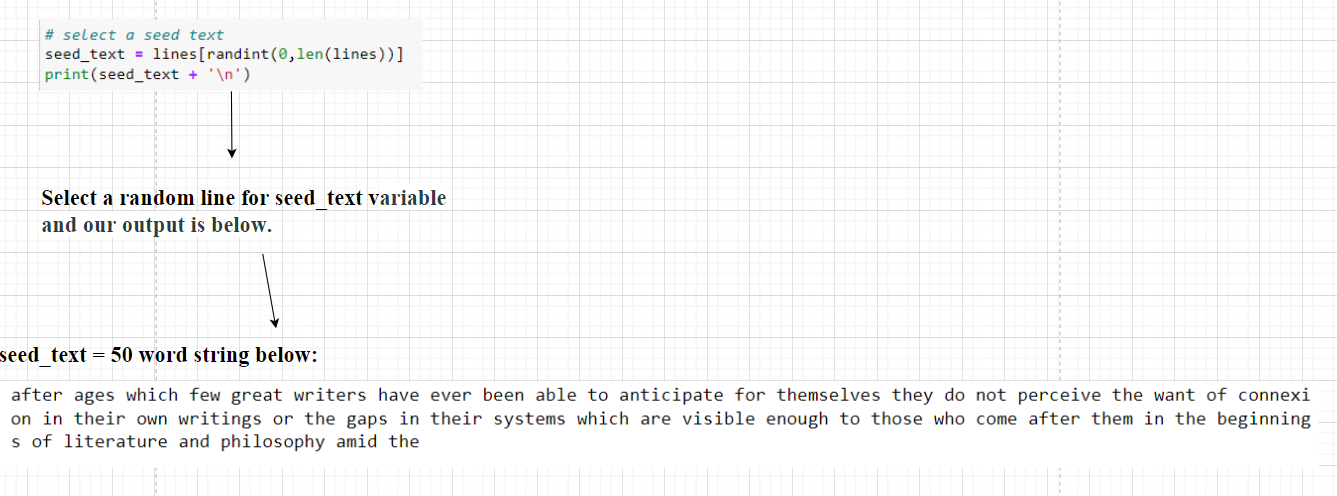

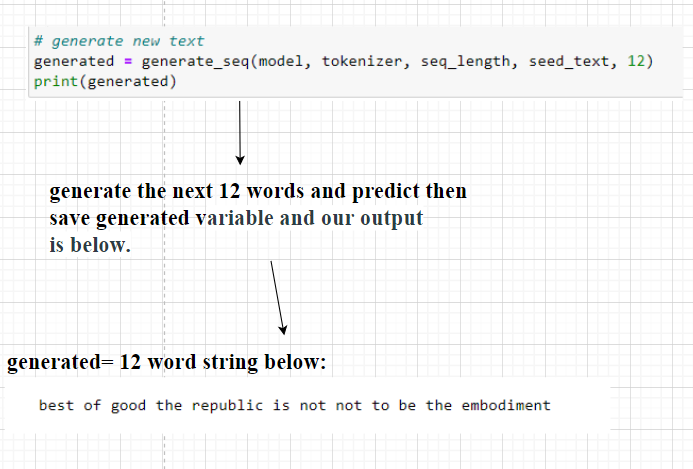

['the', 'project', 'gutenberg', 'ebook', 'of', 'the', 'republic', 'by', 'plato', 'this', 'ebook', 'is', 'for', 'the', 'use', 'of', 'anyone', 'anywhere', 'in', 'the', 'united', 'states', 'and', 'most', 'other', 'parts', 'of', 'the', 'world', 'at', 'no', 'cost', 'and', 'with', 'almost', 'no', 'restrictions', 'whatsoever', 'you', 'may', 'copy', 'it', 'give', 'it', 'away', 'or', 're', 'use', 'it', 'under', 'the', 'terms', 'of', 'the', 'project', 'gutenberg', 'license', 'included', 'with', 'this', 'ebook', 'or', 'online', 'at', 'if', 'you', 'are', 'not', 'located', 'in', 'the', 'united', 'states', 'you', 'will', 'have', 'to', 'check', 'the', 'laws', 'of', 'the', 'country', 'where', 'you', 'are', 'located', 'before', 'using', 'this', 'ebook', 'title', 'the', 'republic', 'author', 'plato', 'translator', 'b', 'jowett', 'release', 'date', 'october', 'ebook', 'most', 'recently', 'updated', 'september', 'language', 'english', 'produced', 'by', 'sue', 'asscher', 'and', 'david', 'widger', 'start', 'of', 'the', 'project', 'gutenberg', 'ebook', 'the', 'republic', 'the', 'republic', 'by', 'plato', 'translated', 'by', 'benjamin', 'jowett', 'note', 'see', 'also', 'the', 'republic', 'by', 'plato', 'jowett', 'ebook', 'contents', 'introduction', 'and', 'analysis', 'the', 'republic', 'of', 'plato', 'is', 'the', 'longest', 'of', 'his', 'works', 'with', 'the', 'exception', 'of', 'the', 'laws', 'and', 'is', 'certainly', 'the', 'greatest', 'of', 'them', 'there', 'are', 'nearer', 'approaches', 'to', 'modern', 'metaphysics', 'in', 'the', 'philebus', 'and', 'in', 'the', 'sophist', 'the', 'politicus', 'or', 'statesman', 'is', 'more', 'ideal', 'the', 'form', 'and', 'institutions', 'of', 'the', 'state', 'are', 'more', 'clearly', 'drawn']

Total Tokens: 21735

Unique Tokens: 3548